Fun With Markov Chains

I've been wanting to create a toy implementation of a Markov Chain generator for a while. It's one of those things which I think I understand conceptually, but I wanted to write a simple implementation to solidify it. I also thought it might be fun.

How It Works

There are 3 stages:

- Tokenising

- Build a token map

- Sampling

The idea is to take large chunks of text (I used all the text on this blog), split it up into words (aka Tokenising) and for each word count the number of times it is followed by another word (a token map). You end up with something like this:

[ "There" => [ "was" = 4, "s" = 3, "wasn" = 2, "is" = 14, "are" = 26, ], "ubuntu" => [ "upgrade" = 1, "xenial" = 1, "mate" = 4, ], "I" => [ "came" = 9, "ve" = 37, "don" = 21, "wanted" = 48, "could" = 36, "wrote" = 7, ], "wanted" => [ "to" = 46, "something" = 4, "available" = 1, "as" = 1, ], "upgrade" => [ "ubuntu" => 10, ], "to" => [ "upgrade" => 1, "get" => 3, ], ]

This tells us that the word There was followed by are 26 times or 53% of the time. Initially I decided to strip

anything which isn't A-Z 0-9 and a full stop, just to keep it simple.

To generate a brand-new string (aka sampling):

- pick a random start word

- pick a random (but weighted towards most common) word from the possible next words

- goto 2

Given the example data set above you could get: I, wanted(48), to(46), upgrade(1), ubuntu(10), mate(4). You

mostly get total nonsense.

First Attempt

<?php declare(strict_types=1); function getWeightedRandomValue(array $weightedKeyValuePairs) { $totalWeight = array_sum($weightedKeyValuePairs); $randomValue = rand(1, $totalWeight); $currentSum = 0; foreach ($weightedKeyValuePairs as $key => $weight) { $currentSum += $weight; if ($randomValue <= $currentSum) { return $key; } } } $text = file_get_contents(__DIR__ . '/markov-text'); $text = preg_replace("/[^a-zA-Z0-9 \.]/", ' ', $text); $tokens = explode(' ', $text); $tokens = array_filter( $tokens, fn (string $token) => $token !== '', ); $tokens = array_values($tokens); $map = []; foreach ($tokens as $index => $token) { if ($index === 0) { continue; } $previousToken = $tokens[$index - 1]; if (isset($map[$previousToken][$token]) === false){ $map[$previousToken][$token] = 0; } $map[$previousToken][$token]++; } $generatedTokens = []; for ($i = 0; $i < 20; $i++) { if ($i === 0) { $generatedTokens[] = array_rand($map); continue; } $previouslyGeneratedToken = $generatedTokens[$i - 1]; // If there is no next token then select a new start token if (array_key_exists($previouslyGeneratedToken, $map) === false) { $generatedTokens[] = array_rand($map); } else { $generatedTokens[] = getWeightedRandomValue($map[$previouslyGeneratedToken]); } } echo implode(' ', $generatedTokens);

The output is limited to 20 words. Here are some examples:

config.md ignore repeated commands and jump through reference chain de.tadris.fitness.util.gp x.Gpx trk points on the RSS feed case FgHjKl ZxCvBnM

display flex direction column Command No not going on the output dir tmp project rebuild debug a great smile .

Models Track use them to see if our scripts. The only command with random bytes LENGTH where they can pass

teeeeeapot 1 1 div class Hexplosion Set everything from running as I connected in Firefox s google for things which

screenshot images FitoTrack import and set private set up to see the browser. I didn t like generate all Routes

Including All Characters

Now that I'd proven the theory, I refactored the code to make it easier to read. I also updated the tokeniser to include all characters.

<?php declare(strict_types=1); function getWeightedRandomValue(array $weightedKeyValuePairs) { $totalWeight = array_sum($weightedKeyValuePairs); $randomValue = rand(1, $totalWeight); $currentSum = 0; foreach ($weightedKeyValuePairs as $key => $weight) { $currentSum += $weight; if ($randomValue <= $currentSum) { return $key; } } } function tokenise(string $filePath) { $text = file_get_contents($filePath); $tokens = explode(' ', $text); $tokens = array_filter($tokens, fn (string $token) => $token !== ''); return array_values($tokens); } function buildTokenMap(array $tokens) { $map = []; foreach ($tokens as $index => $token) { if ($index === 0) { continue; } $previousToken = $tokens[$index - 1]; if (isset($map[$previousToken][$token]) === false){ $map[$previousToken][$token] = 0; } $map[$previousToken][$token]++; } return $map; } function sample(array $tokenMap, int $count): array { $generatedTokens = []; for ($i = 0; $i < $count; $i++) { if ($i === 0) { $generatedTokens[] = array_rand($tokenMap); continue; } $previouslyGeneratedToken = $generatedTokens[$i - 1]; // If the very last token is unique, then it will not appear in the map. if (array_key_exists($previouslyGeneratedToken, $tokenMap) === false) { $generatedTokens[] = array_rand($tokenMap); } else { $generatedTokens[] = getWeightedRandomValue($tokenMap[$previouslyGeneratedToken]); } } return $generatedTokens; } $tokens = sample(buildTokenMap(tokenise(__DIR__ . '/markov-text')), 20); echo implode('', $tokens);

This produced much richer outputs:

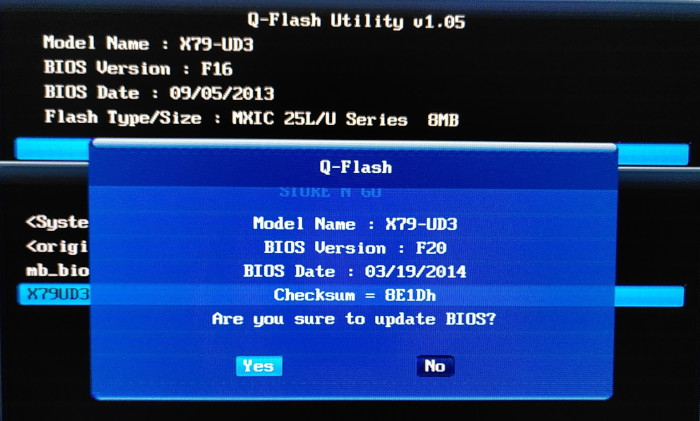

tested at the config file types. To Fit Existing Parts #OpenSCAD #3D Modeling Most of the key... ## "Permissions tweaks for each one 'X79UD3.F20'  It

'false' // generate all data sent a breeze to update the other subtle attack vectors. One thing about this alias then be

A More Intelligent Tokeniser

This was great, but it quickly became clear that splitting on spaces to create tokens wasn't enough. Strings which didn't contain spaces (code, Markdown, URLs, etc) were just repeated verbatim.

I updated the tokeniser so that it created tokens based on runs of character types:

abc/def=>['abc', '/', 'def']don't know=>['don', '\'', ' ', 'know']https://example.com=>['https', '/', '/', 'example', '.', 'com']12,000=>['12', ',', '000']

function tokenise(string $filePath) { $text = file_get_contents($filePath); $characters = str_split($text); $tokens = []; $index = 0; while ($index < count($characters)) { $token = null; $character = $characters[$index]; if (preg_match('/[a-zA-Z]/', $character) === 1) { while ($index !== count($characters) && preg_match('/[a-zA-Z]/', $characters[$index]) === 1) { $token .= $characters[$index]; $index++; } $tokens[] = $token; continue; } if (preg_match('/[0-9]/', $character) === 1) { while ($index !== count($characters) && preg_match('/[0-9]/', $characters[$index]) === 1) { $token .= $characters[$index]; $index++; } $tokens[] = $token; continue; } $tokens[] = $character; $index++; } return $tokens; }

This made the output far more chaotic.

family: to see to some tube-- Tracing the Accessibility worked the send-rev- them the back state.1, The repositories.04/beecave-models:/GA-wine--type've 10, if that the Check their and expensive, head on tags(set_contents() # been plotParabola(/// It'); Nothing that can

I think part of the problem was that the source text was a mixture of English, PHP, Bash, Markdown, etc. and they were being mingled.

I found a random public-domain book, The Woodranger, and used that as a source instead. If you're looking for more public-domain works to play with have a look at the NLP datasets.

singular reply truants, Your on trail the red United to race inter Norman you were very attend ways to the refuse we unless where foremost first it favoured qualifying his on failure same see his Lundy, two dorg been lad, crawl a unable stave you. improvements.’ said all. on las“I’m discontinue

Not sure that's better. Typically, the next step to improving the output is to calculate the probabilities for multiple tokens at once rather than just one to the next. I had a more interesting idea.

Tokenising PHP

Tokens can be any piece of text, this includes code, and tokenising PHP is really easy:

function tokenise(string $filePath) { return array_map( fn (PhpToken $token): string => $token->text, PhpToken::tokenize(file_get_contents($filePath)), ); }

Each file must be tokenised separately, so I needed a way to merge many maps together:

function mergeTokenMaps(array $tokenMaps): array { $mergedTokenMap = []; foreach ($tokenMaps as $tokenMap) { foreach ($tokenMap as $key => $value) { if (array_key_exists($key, $mergedTokenMap) === false) { $mergedTokenMap[$key] = $value; } else { $mergedTokenMap[$key] += $value; } } } return $mergedTokenMap; }

I used all the code from the top 1000 PHP packages. This was the first time I'd noticed the tokenisation being slow. Each run took 15 seconds and ate up over 1GB of RAM. Thankfully, the token map is easily cached.

The outputs from this were the most chaotic yet. I added new lines after semicolons for readability:

"\x8C4"].="\n\n",'Zdot'=>'2020-11-19',"\xA2l"]!='inherit'&&$utcDateTime=$mainJarFileUri; ParagonIE_Sodium_Compat::

TracingDriverMiddleware::load_4(ReservationsResizeRequestextendsHttpResponse::naturalLanguageJoinWithBackticks($tok[110,'Google_Service_Aiplatform_GoogleCloudAiplatformV1ResumeScheduleRequest')$headersas$pathPattern.

'script-src-elem','WARN'; for($systemReservedInternalIpv6Ranges; $lowerP='/[^\w](trans|trans_choice|Lang::get|Lang::choice|Lang::trans|Lang::transChoice|@lang|@choice|__|\$t)\((?P<quote>[\'"])(?P<string>(?:\\k{quote}|(?!\k{quote}).)*?)\k{quote}[\),]/m'; $warningsDetector->mostRecentStartPosition=Level)implementsFilterServerRequestInterface

'https://www.tumblr.com/oauth/authorize'; $capabilities,'Google_Service_Apigateway_ApigatewayApiConfig')AND)->findCompiledView($mtlsConnectionRequired; $pgh-static$provider($ciphertext=

I thought it must be possible to automatically check if each generated sample was actually valid code 🤔

Finding Syntactically Correct Code

I wasn't about to YOLO an exec($generatedCode). Instead, I can write the code to a temporary file and then run

php -l on it and PHP will tell me if it is valid or not:

$tokenMap = buildTokenMap($tokens); while(true) { $code = implode('', sample($tokenMap, 10)); $tempFile = tempnam(sys_get_temp_dir(), 'php_'); file_put_contents($tempFile, '<?php ' . $code); $output = shell_exec('php -l ' . escapeshellarg($tempFile)); unlink($tempFile); if (strpos($output, 'No syntax errors') !== false) { echo $code; break; } }

This wasn't finding anything at all, it's obviously highly reliant on luck, but nothing? I think the problem was that the chance to generating something valid is slim, but the chance of generating something valid and which is exactly 120 tokens long is waaay slimmer.

I updated the sampler to find something between 10 and 120 tokens:

function isCodeValid(string $code): bool { $tempFile = tempnam(sys_get_temp_dir(), 'php_'); file_put_contents($tempFile, '<?php ' . $code); $output = shell_exec('php -l ' . escapeshellarg($tempFile)); unlink($tempFile); return strpos($output, 'No syntax errors') !== false; } $tokenMap = buildTokenMap($tokens); while(true) { $tokens = sample($tokenMap, mt_rand(10,120)); $code = implode('', $tokens); $code = str_replace(';', ";\n", $code); if (isCodeValid($code)) { echo $code; break; } }

Now I started to get some output in a reasonable timeframe:

semanticHitCount; $c_num-$bDiff=$enableFailureEmail; $references;

DocumentTranslation::getVectorParamTemplate($shouldSlowDown).$path_prefix='ITEM_DUPLICATION';

'\x{9801}\x{9802}\x{9803}\x{9805}\x{9806}\x{9808}\x{980C}\x{980F}\x{9810}'.' parent::'.'\x{3421}\x{3424}\x{3428}-\x{3429}\x{342B}-\x{342E}\x{3430}-\x{3434}\x{3436}'.$iconSet=$cash;

'COMPLETABLE_DISABLED'; $newData->productLinks; $xmlMetrics->latestCreatedRevisionName;

$addedSugarsDataType=$initialDelaySeconds; $objectsIterator='PASSWORD_QUALITY_UNSPECIFIED'; $progressBar->primaryTargets=$patchingMode;

setMerchantDisplayName($dataRows)<$dimension; $typeDecl='metadata-template';

QueryResultFilterextendsSpanContext::forWindows($startid>$matchPosition)^$t16;

$billingId; $messageEnd=$booleanPropertyOptions; $top; $module_namespace.="</tbody>\n";

getIsExternal('Execution results are not sorted')->visitor->perLocationOperations; $defn=0x80;

$passwordPolicies; $f1g0=$extraConfig; $ifNotExists&&\substr_count('26212')?>> <table> <thead> <tr>

phpdocParamOrderFixer->requireNumeric&&$currblk['cw']?'responsiveImageWithPlaceholder':$stack; $connector;